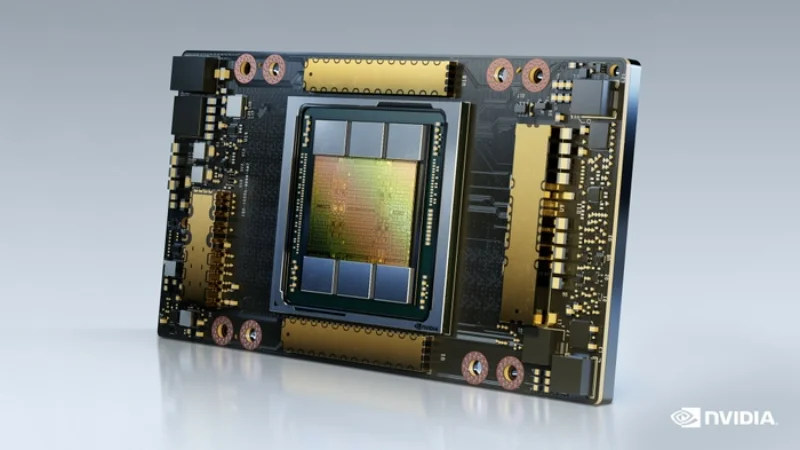

NVIDIA A100 40GB

Entry-level data center GPU for AI training and inference

- VRAM: 40 GB
- Bandwidth: 1.6 TB/s
- FP16: 624 TFLOPS (with sparsity)
- TDP: 400W (SXM) / 250W (PCIe)

Technical Specifications

| Specification | Value |
| --- | --- |
| VRAM | 40 GB HBM2 |
| Memory Bandwidth | 1.6 TB/s |
| FP16 Performance | 624 TFLOPS (with sparsity; 312 TFLOPS dense) |
| BF16 Performance | 624 TFLOPS (with sparsity; 312 TFLOPS dense) |
| FP32 Performance | 19.5 TFLOPS |
| INT8 Performance | 1,248 TOPS (with sparsity; 624 TOPS dense) |
| TDP | 400W (SXM) / 250W (PCIe) |
| Form Factor | SXM4 / PCIe Gen4 |
| Interconnect | NVLink 3.0 (600 GB/s) |
| PCIe Interface | PCIe Gen4 x16 |
| Max GPUs per Server | 8 (HGX A100) / 4-8 (PCIe) |

Prices vary with supply and import costs. Contact us for current India pricing.

Best For

Not Ideal For

- Large LLMs that need 80 GB or more of VRAM at 16-bit precision (LLaMA 70B, Mixtral 8x7B)

- Memory-bandwidth-bound workloads (the 80GB variant offers 2.0 TB/s versus 1.6 TB/s here)

Overview

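The NVIDIA A100 40GB is the original Ampere data center GPU. It delivers the same peak FP16 tensor throughput as the 80GB variant (624 TFLOPS with sparsity), but with 40 GB of HBM2 and 1.6 TB/s of memory bandwidth instead of 80 GB and 2.0 TB/s. For compute-bound workloads that fit within the 40 GB memory envelope, performance is essentially the same as the 80GB model; memory-bandwidth-bound workloads run faster on the 80GB variant.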
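One way to reason about when the two variants perform alike is a simple roofline estimate: attainable throughput is the smaller of peak compute and arithmetic intensity times memory bandwidth. The sketch below uses only the figures quoted on this page; the function names are illustrative, and real kernels land below these ceilings.

```python
# Roofline sketch: ceiling = min(peak compute, arithmetic intensity * bandwidth).
# All figures are the ones quoted on this page, not measurements.

PEAK_FP16_TFLOPS = 624.0  # page figure (sparsity peak); same for 40GB and 80GB
BANDWIDTH_TBPS = {"A100 40GB": 1.6, "A100 80GB": 2.0}


def attainable_tflops(intensity_flop_per_byte: float,
                      bandwidth_tbps: float,
                      peak_tflops: float = PEAK_FP16_TFLOPS) -> float:
    """Roofline ceiling in TFLOPS for a kernel with the given arithmetic intensity."""
    # (FLOP / byte) * (TB / s) = TFLOP / s
    return min(peak_tflops, intensity_flop_per_byte * bandwidth_tbps)


def ridge_point(bandwidth_tbps: float,
                peak_tflops: float = PEAK_FP16_TFLOPS) -> float:
    """Arithmetic intensity (FLOP/byte) above which a kernel is compute-bound."""
    return peak_tflops / bandwidth_tbps


for name, bw in BANDWIDTH_TBPS.items():
    print(f"{name}: compute-bound above ~{ridge_point(bw):.0f} FLOP/byte")
    for intensity in (10, 100, 1000):
        ceiling = attainable_tflops(intensity, bw)
        print(f"  intensity {intensity:>4} FLOP/byte -> ceiling {ceiling:.0f} TFLOPS")
```

At low arithmetic intensity the ceilings differ in proportion to bandwidth (1.6 versus 2.0 TB/s); at high intensity both variants hit the same compute ceiling.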

This makes the A100 40GB a good-value option for teams serving or parameter-efficiently fine-tuning models up to about 13B parameters on a single GPU (the weights take roughly 26 GB at 16-bit precision), or running distributed training across multiple GPUs for larger models. It is also well-suited for computer vision, diffusion models, and most inference workloads where model weights fit in 40 GB.
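A quick way to check whether model weights fit in 40 GB is to multiply the parameter count by the bytes per parameter. The sketch below is a rough estimate only: the 20% headroom reserved for activations, KV cache, and framework overhead is an assumption, not a vendor figure, and the helper names are illustrative.

```python
# Rough check of whether model weights fit on one A100 40GB.
# The headroom fraction is an assumed allowance, not a measured value.

BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "bf16": 2.0, "int8": 1.0, "int4": 0.5}
GPU_MEMORY_GB = 40.0


def weights_gb(params_billion: float, dtype: str) -> float:
    """Weight footprint in GB (1e9 params * bytes per param / 1e9 bytes per GB)."""
    return params_billion * BYTES_PER_PARAM[dtype]


def fits_on_gpu(params_billion: float, dtype: str,
                headroom_fraction: float = 0.2) -> bool:
    """True if the weights fit after reserving the assumed headroom."""
    usable_gb = GPU_MEMORY_GB * (1.0 - headroom_fraction)
    return weights_gb(params_billion, dtype) <= usable_gb


for params, dtype in [(7, "fp16"), (13, "fp16"), (13, "fp32"), (70, "fp16")]:
    verdict = "fits" if fits_on_gpu(params, dtype) else "does not fit"
    print(f"{params}B @ {dtype}: {weights_gb(params, dtype):.0f} GB of weights, {verdict}")
```

Under these assumptions a 13B model at 16-bit precision needs about 26 GB for weights and fits, while a 70B model at the same precision needs about 140 GB and does not.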

The A100 40GB is more readily available and more affordable than the 80GB variant. For organizations building GPU infrastructure on a budget, consider pairing multiple A100 40GB units over NVLink so that model-parallel or sharded training can spread a larger model across the combined GPU memory.
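To get a feel for how many 40 GB GPUs a sharded training job might need, the sketch below uses the common rule of thumb of about 16 bytes per parameter for mixed-precision training with Adam (16-bit weights and gradients plus 32-bit master weights and two optimizer moments). It assumes the training state is sharded evenly across GPUs, and the 60% usable fraction left after activations and buffers is an assumption. Parameter-efficient fine-tuning needs far less than this.

```python
import math

# Rule-of-thumb sizing for fully sharded mixed-precision Adam training.
# 16 bytes/param = 2 (weights) + 2 (gradients) + 12 (fp32 master copy and two moments).
BYTES_PER_PARAM_TRAINING = 16.0


def training_state_gb(params_billion: float) -> float:
    """Weights + gradients + optimizer state in GB, excluding activations."""
    return params_billion * BYTES_PER_PARAM_TRAINING


def min_gpus(params_billion: float,
             gpu_memory_gb: float = 40.0,
             usable_fraction: float = 0.6) -> int:
    """Smallest GPU count whose usable memory holds the evenly sharded state."""
    usable_per_gpu_gb = gpu_memory_gb * usable_fraction  # assumed share left for model state
    return math.ceil(training_state_gb(params_billion) / usable_per_gpu_gb)


for params in (1, 7, 13):
    print(f"{params}B params: ~{training_state_gb(params):.0f} GB of training state, "
          f"at least {min_gpus(params)} x A100 40GB")
```

The estimate ignores activation memory and communication overhead, so treat the result as a lower bound when sizing a cluster.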
