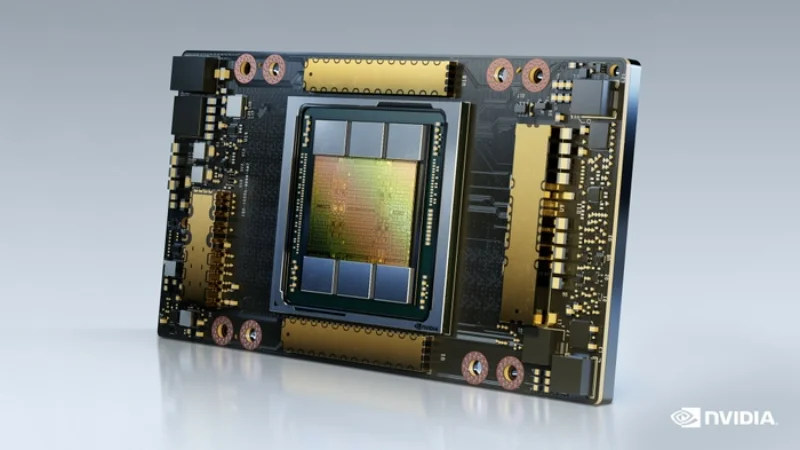

NVIDIA A30

Compact Ampere GPU for inference and mainstream AI workloads

- VRAM: 24 GB

- Bandwidth: 933 GB/s

- FP16: 165 TFLOPS

- TDP: 165W

Technical Specifications

| Specification | Value |
| --- | --- |
| VRAM | 24 GB HBM2e |
| Memory Bandwidth | 933 GB/s |
| FP16 Performance | 165 TFLOPS |
| BF16 Performance | 165 TFLOPS |
| FP32 Performance | 10.3 TFLOPS |
| INT8 Performance | 330 TOPS |
| TDP | 165W |
| Form Factor | PCIe Gen4 Dual-Slot |
| PCIe Interface | PCIe Gen4 x16 |
| Max GPUs per Server | Up to 8 |
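For capacity planning, the per-card figures above can be multiplied out to a fully populated server. The following is a minimal Python sketch under stated assumptions: `GpuSpec` and `server_totals` are hypothetical names introduced here for illustration, the totals are simple sums of the peak figures in the table, and they cover GPUs only (no CPU, fans, or networking). Real workloads rarely scale perfectly linearly across cards.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GpuSpec:
    """Per-card figures copied from the specification table."""
    name: str
    vram_gb: int
    fp16_tflops: float
    tdp_watts: int


# Values taken from the table above.
A30 = GpuSpec(name="NVIDIA A30", vram_gb=24, fp16_tflops=165.0, tdp_watts=165)


def server_totals(spec: GpuSpec, gpu_count: int) -> dict:
    """Sum peak per-card figures for a server holding gpu_count cards.

    Assumption: GPU-only totals; host power and scaling losses are ignored.
    """
    if gpu_count < 1:
        raise ValueError("gpu_count must be at least 1")
    return {
        "vram_gb": spec.vram_gb * gpu_count,
        "fp16_tflops": spec.fp16_tflops * gpu_count,
        "gpu_power_watts": spec.tdp_watts * gpu_count,
    }


# A fully populated 8-GPU server, the maximum listed in the table.
totals = server_totals(A30, 8)
print(totals)
# {'vram_gb': 192, 'fp16_tflops': 1320.0, 'gpu_power_watts': 1320}
```

The 1,320 W figure is the GPU power budget alone, so rack power and cooling should be sized with headroom for the rest of the system.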

Prices vary with supply and import costs. Contact us for current India pricing.

Best For

Not Ideal For

- Training large models (limited VRAM and compute vs A100)

- High-throughput LLM inference (L4 or L40S offer better value at this VRAM tier)

Overview

The NVIDIA A30 is a compact, power-efficient Ampere GPU designed for mainstream AI inference. It pairs 24 GB of HBM2e memory with a 165W TDP, which keeps per-card power low enough for dense deployments of up to 8 GPUs per server.

The A30 supports Multi-Instance GPU (MIG) technology, allowing a single GPU to be partitioned into up to four isolated instances. This makes it well-suited for multi-tenant cloud environments where different users or applications need dedicated GPU resources.
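The partitioning described above can be sized roughly as follows. This is a minimal Python sketch, assuming the 24 GB of memory divides evenly across instances and that the card is split into 1, 2, or 4 equal instances. The names `memory_per_instance` and `fits` are hypothetical helpers, not part of any NVIDIA tool. The actual instance profiles are defined by the NVIDIA driver, and usable memory per instance may be somewhat lower than the nominal figure.

```python
A30_VRAM_GB = 24                      # from the spec table
ALLOWED_INSTANCE_COUNTS = (1, 2, 4)   # assumption: equal-sized splits only


def memory_per_instance(instances: int, total_gb: int = A30_VRAM_GB) -> float:
    """Nominal memory per MIG instance for an even split of the card."""
    if instances not in ALLOWED_INSTANCE_COUNTS:
        raise ValueError(f"instances must be one of {ALLOWED_INSTANCE_COUNTS}")
    return total_gb / instances


def fits(model_gb: float, instances: int) -> bool:
    """Check whether a workload's memory footprint fits in one instance."""
    return model_gb <= memory_per_instance(instances)


if __name__ == "__main__":
    for count in ALLOWED_INSTANCE_COUNTS:
        print(f"{count} instance(s): {memory_per_instance(count):.0f} GB each")
    # A hypothetical 5 GB model fits in a quarter-card instance; a 10 GB one does not.
    print(fits(5, 4), fits(10, 4))
```

With four tenants, each gets a nominal 6 GB, so workloads that need more memory have to use fewer, larger instances.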

While the A30 has been largely superseded by the L4 for new inference deployments, it remains a capable option for organizations that prefer HBM memory (vs GDDR6 on the L4) or need MIG support. We supply both new and refurbished A30 units.

Get NVIDIA A30 pricing for your setup

Tell us your workload and cluster size. We'll quote the complete solution including servers, networking, and colocation.