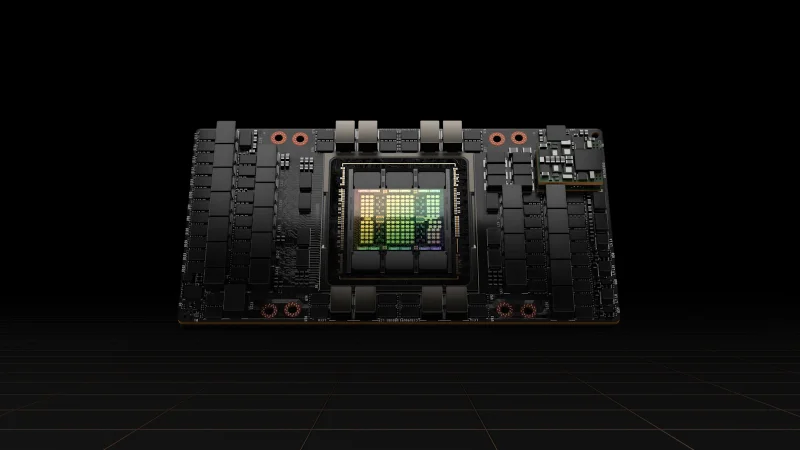

NVIDIA H100 SXM

The gold standard for LLM training and large-scale AI

- VRAM: 80 GB
- Bandwidth: 3.35 TB/s
- FP16: 989.4 TFLOPS
- TDP: 700W

Technical Specifications

| Specification | Value |
| --- | --- |
| VRAM | 80 GB HBM3 |
| Memory Bandwidth | 3.35 TB/s |
| FP16 Performance | 989.4 TFLOPS |
| BF16 Performance | 989.4 TFLOPS |
| FP32 Performance | 66.9 TFLOPS |
| INT8 Performance | 1,979 TOPS |
| TDP | 700W |
| Form Factor | SXM5 |
| Interconnect | NVLink 4.0 (900 GB/s) |
| PCIe Interface | PCIe Gen5 x16 |
| Max GPUs per Server | 8 (HGX H100) |
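As a rough worked example, the per-GPU figures listed above can be multiplied out for a fully populated 8-GPU HGX H100 node. The sketch below is illustrative only: the `GpuSpec` class and the function names are hypothetical, the totals are simple peak-spec sums rather than measured performance, and the power figure covers GPUs only (CPUs, fans, and networking are extra). It also computes the ratio of peak FP16 compute to memory bandwidth, which indicates roughly how many floating-point operations a kernel must perform per byte read before it stops being limited by memory bandwidth.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GpuSpec:
    """Peak per-GPU figures copied from the specification list (hypothetical container)."""
    vram_gb: float
    mem_bw_tb_s: float
    fp16_tflops: float
    tdp_w: float


# Values taken from the specifications above.
H100_SXM = GpuSpec(vram_gb=80, mem_bw_tb_s=3.35, fp16_tflops=989.4, tdp_w=700)
GPUS_PER_NODE = 8  # maximum per HGX H100 server


def node_totals(gpu: GpuSpec, n_gpus: int) -> dict:
    """Sum peak per-GPU figures across a node (theoretical peaks, not benchmarks)."""
    return {
        "vram_gb": gpu.vram_gb * n_gpus,
        "fp16_tflops": gpu.fp16_tflops * n_gpus,
        "gpu_power_w": gpu.tdp_w * n_gpus,  # GPUs only, excludes the rest of the server
    }


def ridge_point_flop_per_byte(gpu: GpuSpec) -> float:
    """Peak FP16 FLOPs per byte of memory traffic: TFLOPS / (TB/s) = FLOP/byte."""
    return gpu.fp16_tflops / gpu.mem_bw_tb_s


if __name__ == "__main__":
    totals = node_totals(H100_SXM, GPUS_PER_NODE)
    print(f"Node VRAM:       {totals['vram_gb']:.0f} GB")
    print(f"Node FP16 peak:  {totals['fp16_tflops']:.1f} TFLOPS")
    print(f"Node GPU power:  {totals['gpu_power_w']:.0f} W")
    print(f"Ridge point:     {ridge_point_flop_per_byte(H100_SXM):.1f} FLOP/byte")
```

Under these assumptions an 8-GPU node offers 640 GB of combined VRAM and draws up to 5,600 W for the GPUs alone, which is a useful starting point for power and cooling discussions.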

Prices vary with supply and import costs. Contact us for current India pricing.

Best For

Not Ideal For

- Budget-constrained inference (consider L4 or L40S for better cost per token)

- Desktop workstation use (SXM requires purpose-built server chassis)

Overview

The NVIDIA H100 SXM is one of the most sought-after data center GPUs for AI training. Built on the Hopper architecture, it delivers 989.4 TFLOPS of dense FP16 Tensor Core compute and uses fourth-generation NVLink, which provides 900 GB/s of GPU-to-GPU bandwidth per GPU within an 8-GPU HGX baseboard.

For LLM training, the H100 SXM delivers up to roughly 3x the training throughput of an A100 on transformer-based models, depending on model size and precision. Combined with InfiniBand NDR networking, 8-GPU nodes can scale near-linearly across hundreds of nodes for training frontier-class models.
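One way to check a multi-node scaling claim on your own workload is to compute scaling efficiency: measured cluster throughput divided by the ideal of N times single-node throughput. The sketch below is a minimal illustration; the function name is hypothetical and the throughput numbers in the usage example are made-up placeholders, not H100 benchmark results.

```python
def scaling_efficiency(throughput_n: float, nodes: int, throughput_1: float) -> float:
    """Return measured throughput on `nodes` nodes as a fraction of ideal linear scaling.

    throughput_n: measured throughput on the full cluster (e.g. tokens/s)
    nodes:        number of nodes in the cluster
    throughput_1: measured throughput on a single node, same units
    """
    if nodes < 1:
        raise ValueError("nodes must be at least 1")
    if throughput_1 <= 0:
        raise ValueError("single-node throughput must be positive")
    return throughput_n / (nodes * throughput_1)


if __name__ == "__main__":
    # Placeholder numbers for illustration only, not measured results.
    single_node = 100.0   # e.g. thousand tokens/s on one 8-GPU node
    cluster = 5760.0      # same metric measured on 64 nodes
    eff = scaling_efficiency(cluster, nodes=64, throughput_1=single_node)
    print(f"Scaling efficiency on 64 nodes: {eff:.0%}")
```

A value of 1.0 corresponds to perfectly linear scaling; real clusters usually land somewhat below that because of communication overhead between nodes.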

The SXM form factor requires a compatible baseboard (HGX H100). We supply complete server solutions from Supermicro, Dell, and HPE that include the chassis, baseboard, CPUs, RAM, and networking pre-configured for your cluster size. All units come with GST invoicing and pan-India delivery.

Compare

NVIDIA H100 vs AMD Instinct MI300X

Compare NVIDIA H100 SXM and AMD Instinct MI300X GPUs for AI training and HPC. VRAM, bandwidth, performance, and ecosystem analysis for Indian enterprises.

View comparison

NVIDIA H100 vs L40S

Compare NVIDIA H100 and L40S GPUs for AI inference, training, and visual compute. Specs, performance, and pricing analysis for Indian data centres.

View comparison

NVIDIA H100 vs A100

Compare NVIDIA H100 SXM and A100 80 GB GPUs for AI training and inference workloads. Detailed specs, performance, and value analysis for Indian enterprises.

View comparison

Get NVIDIA H100 SXM pricing for your setup

Tell us your workload and cluster size. We'll quote the complete solution including servers, networking, and colocation.