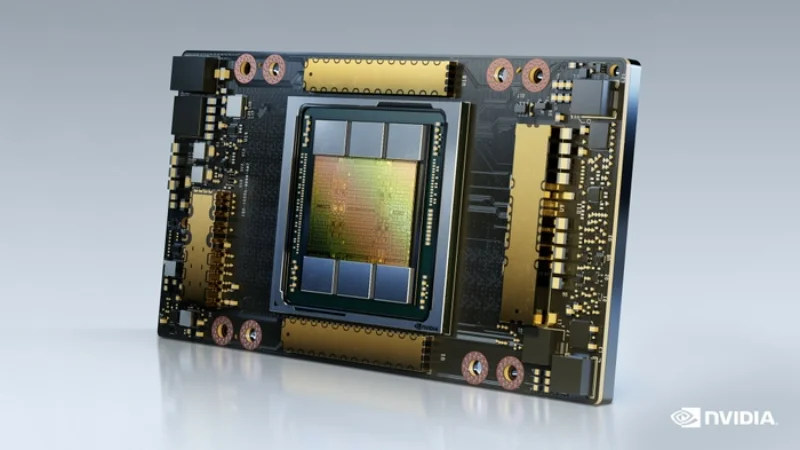

NVIDIA A100 80GB

The proven workhorse for AI training and inference

- VRAM: 80 GB
- Bandwidth: 2.0 TB/s
- FP16: 312 TFLOPS dense (624 TFLOPS with sparsity)
- TDP: 400W (SXM) / 300W (PCIe)

Technical Specifications

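| Specification | Value |
| --- | --- |
| VRAM | 80 GB HBM2e |
| Memory Bandwidth | 2.0 TB/s (SXM) / 1.9 TB/s (PCIe) |
| FP16 Performance (Tensor Core) | 312 TFLOPS dense / 624 TFLOPS with sparsity |
| BF16 Performance (Tensor Core) | 312 TFLOPS dense / 624 TFLOPS with sparsity |
| FP32 Performance | 19.5 TFLOPS |
| INT8 Performance (Tensor Core) | 624 TOPS dense / 1,248 TOPS with sparsity |
| TDP | 400W (SXM) / 300W (PCIe) |
| Form Factor | SXM4 / PCIe Gen4 |
| Interconnect | NVLink 3.0 (600 GB/s) |
| PCIe Interface | PCIe Gen4 x16 |
| Max GPUs per Server | 8 (HGX A100) / 4-8 (PCIe) |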

Prices vary with supply and import costs. Contact us for current India pricing.

Best For

Not Ideal For

- Frontier-scale training (100B+ parameters) where H100 NVLink bandwidth matters

- Latency-sensitive single-request inference (newer GPUs are faster per query)
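To illustrate why single-request latency is limited, here is a rough sketch of a memory-bandwidth bound on decode speed. It assumes that generating each token requires reading all model weights from GPU memory once, and it ignores KV-cache reads, kernel overhead, and batching, so real throughput will be lower. The function name `max_decode_tokens_per_s` and the example model size are illustrative assumptions, not measured figures.

```python
def max_decode_tokens_per_s(bandwidth_gb_s: float, weights_gb: float) -> float:
    """Upper bound on single-request decode speed.

    Assumes every generated token requires one full read of the model
    weights from GPU memory (a simplification; real systems are slower).
    """
    return bandwidth_gb_s / weights_gb


# Assumed example: a 70B-parameter model quantized to 4 bits (~35 GB of
# weights) on an A100 80GB with 2.0 TB/s (2000 GB/s) memory bandwidth.
bound = max_decode_tokens_per_s(bandwidth_gb_s=2000, weights_gb=35)
print(f"Upper bound: {bound:.0f} tokens/s")
```

Under these assumptions the bound is about 57 tokens per second for one request. A GPU with higher memory bandwidth raises this ceiling proportionally.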

Overview

The NVIDIA A100 80GB is one of the most widely deployed data center GPUs for AI. Built on the Ampere architecture, it delivers up to 624 TFLOPS of FP16 Tensor Core throughput with structured sparsity (312 TFLOPS dense), backed by 80 GB of HBM2e memory and 2.0 TB/s of memory bandwidth. Third-generation NVLink provides 600 GB/s of GPU-to-GPU bandwidth in multi-GPU configurations.

For many production AI workloads, the A100 80GB remains a strong value proposition. It offers roughly 60-70% of the H100's training throughput at a significantly lower acquisition cost. For inference, the 80 GB of VRAM comfortably holds quantized LLaMA 70B models (roughly 35 GB of weights at 4-bit precision) and most production serving workloads.
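The VRAM claim can be sanity-checked with a little arithmetic. The sketch below estimates weight memory from parameter count and bits per weight, then checks it against 80 GB. The helper names and the 20% overhead allowance for KV cache and activations are assumptions chosen for illustration; actual overhead depends on context length, batch size, and the serving framework.

```python
def weight_memory_gb(params_billion: float, bits_per_weight: int) -> float:
    """Approximate memory for model weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8


def fits_on_gpu(params_billion: float, bits_per_weight: int,
                vram_gb: float = 80.0, overhead_fraction: float = 0.2) -> bool:
    """Check whether weights plus an assumed overhead fit in VRAM.

    overhead_fraction is a hypothetical allowance for KV cache and
    activations, not a measured value.
    """
    needed = weight_memory_gb(params_billion, bits_per_weight) * (1 + overhead_fraction)
    return needed <= vram_gb


for bits in (16, 8, 4):
    weights = weight_memory_gb(70, bits)
    verdict = "fits" if fits_on_gpu(70, bits) else "does not fit"
    print(f"70B at {bits}-bit: {weights:.0f} GB of weights, {verdict} on one 80 GB GPU")
```

With these assumptions, a 70B model fits on a single card at 4-bit (35 GB of weights), is marginal at 8-bit (70 GB before overhead), and needs multiple GPUs at 16-bit (140 GB).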

The A100 80GB is available in both SXM4 (for HGX baseboards) and PCIe form factors. We supply both new and certified refurbished A100 units with full warranty. For teams building their first GPU cluster, the A100 80GB offers a strong balance of performance, ecosystem maturity, and cost.

Get NVIDIA A100 80GB pricing for your setup

Tell us your workload and cluster size. We'll quote the complete solution including servers, networking, and colocation.