Networking

Enterprise firewalls, managed switches, InfiniBand interconnects, PCIe NICs, and HBAs from top brands.

InfiniBand Networking

NVIDIA (Mellanox) · Quantum-2 (NDR) · Quantum (HDR)

InfiniBand is the interconnect of choice for GPU clusters and high-performance computing environments where low latency and high bandwidth between nodes determine distributed training performance. NVIDIA's (formerly Mellanox) InfiniBand product line includes two active generations: HDR (High Data Rate) at 200 Gb/s per port and NDR (Next Data Rate) at 400 Gb/s per port. An NDR InfiniBand fabric built on Quantum-2 QM9700 switches and ConnectX-7 host channel adapters delivers 400 Gb/s per port with sub-microsecond latency and hardware-offloaded RDMA, enabling NCCL-based all-reduce operations across multi-node H100 clusters at near-theoretical bandwidth. For AI training workloads, InfiniBand's adaptive routing, congestion control, and SHARP (Scalable Hierarchical Aggregation and Reduction Protocol) in-network computing reduce the time spent on gradient synchronisation.

RawCompute designs and deploys turnkey InfiniBand fabrics for AI clusters in India, including NDR and HDR switches (QM9700, QM8700), ConnectX-7 and ConnectX-6 HCAs, active and passive copper cables, and single-mode fibre transceivers for longer inter-rack runs. We handle fabric topology design (fat-tree, rail-optimised), UFM (Unified Fabric Manager) setup, and NCCL performance benchmarking to ensure your cluster hits peak all-reduce throughput before production workloads begin.
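To put the HDR and NDR per-port rates in context, the sketch below estimates the bandwidth-only lower bound for a ring all-reduce, in which each node sends and receives 2(N-1)/N of the payload. The function name, the 14 GB gradient payload, and the one-port-per-node assumption are illustrative and not taken from any vendor tool. Real NCCL runs add latency and protocol overhead and may use tree or SHARP-based algorithms instead.

```python
def ring_allreduce_seconds(payload_bytes: float, nodes: int, link_gbps: float) -> float:
    """Bandwidth-only lower bound for a ring all-reduce over one link per node."""
    if nodes < 2:
        return 0.0  # nothing to synchronise with a single node
    # Each node transmits (and receives) 2 * (N - 1) / N of the payload.
    bytes_on_wire = 2 * (nodes - 1) / nodes * payload_bytes
    link_bytes_per_second = link_gbps * 1e9 / 8
    return bytes_on_wire / link_bytes_per_second


# Hypothetical payload: 14 GB of gradients (about 7B parameters at 16 bits each).
GRADIENT_BYTES = 14e9

for label, gbps in (("HDR", 200), ("NDR", 400)):
    seconds = ring_allreduce_seconds(GRADIENT_BYTES, nodes=8, link_gbps=gbps)
    print(f"{label} ({gbps} Gb/s): {seconds:.3f} s per full gradient all-reduce")
```

Under these assumptions the estimate is roughly 0.98 s on HDR and 0.49 s on NDR for eight nodes, which is why measured NCCL benchmarks are usually compared against a bound of this kind.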

Design Your InfiniBand Fabric

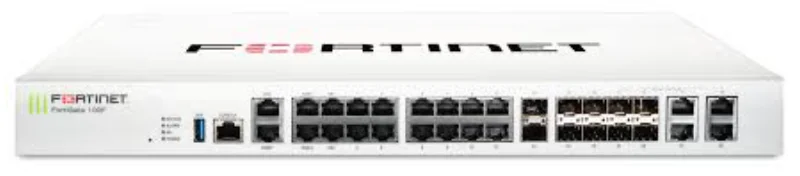

Firewalls & UTM Appliances

Fortinet · Sophos · Netgate (pfSense / TNSR) · Palo Alto Networks · WatchGuard

A properly sized firewall is the first line of defence for any data-centre or enterprise network. RawCompute supplies next-generation firewall (NGFW) and unified threat management (UTM) appliances from Fortinet FortiGate, Sophos XGS, and Netgate pfSense/TNSR platforms to match every throughput and feature requirement.

Fortinet FortiGate appliances (e.g., FortiGate 600F, 1800F, 3700F) use purpose-built NP7 and CP9 ASICs to deliver line-rate threat inspection at up to 400 Gbps firewall throughput without the performance penalties of software-only solutions. Sophos XGS series appliances feature Xstream architecture with dedicated flow processors for TLS inspection, making them ideal for mid-market and SMB deployments where ease of management (Sophos Central cloud console) is a priority. For organisations that prefer open-source-based security, Netgate pfSense Plus and TNSR appliances offer stateful firewalling, VPN termination (IPsec/WireGuard/OpenVPN), and routing on certified hardware without recurring per-feature licensing.

All firewalls we sell include initial configuration assistance, HA pair setup, and integration with your existing SIEM or log management platform.

Get Firewall Recommendations

Managed Ethernet Switches

Arista · Cisco · Juniper · FS.com · Dell

Managed Ethernet switches form the backbone of every data-centre and enterprise LAN, providing VLAN segmentation, link aggregation, quality of service, and telemetry at speeds from 1/10/25 GbE at the access layer to 100/400 GbE at the spine and core. RawCompute supplies switches across every tier of the network fabric.

For top-of-rack (ToR) deployments, we recommend the Arista 7050X3 series (for example, the 7050SX3-48YC8 with 48 x 25 GbE SFP28 + 8 x 100 GbE QSFP28) or the Cisco Nexus 93180YC-FX3 for shops standardised on NX-OS. At the spine layer, the Arista 7060X5 (32 x 400 GbE QSFP-DD) or Juniper QFX5220 provide non-blocking leaf-spine fabrics for east-west traffic in large compute or GPU clusters. For cost-sensitive deployments, FS.com S5860 and N8560 series switches deliver 10/25/100 GbE with open networking OS support (Cumulus Linux, SONiC) at a fraction of brand-name pricing.

Every switch we sell is configured with your VLAN layout, spanning-tree or EVPN-VXLAN settings, and monitoring (SNMP, streaming telemetry, sFlow) before shipment. We also stock SFP28, SFP+, QSFP28, and QSFP-DD optics and DAC cables from Intel, Mellanox, and FS.com.
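When sizing a leaf-spine fabric, one quick check is the oversubscription ratio of a top-of-rack switch: total server-facing bandwidth divided by total uplink bandwidth. The minimal sketch below applies that arithmetic to the 48 x 25 GbE + 8 x 100 GbE port layout mentioned above. The function name is illustrative, and the result assumes every port is populated and in use.

```python
def oversubscription_ratio(downlinks: int, downlink_gbps: float,
                           uplinks: int, uplink_gbps: float) -> float:
    """Ratio of server-facing bandwidth to spine-facing bandwidth on one leaf."""
    downlink_total = downlinks * downlink_gbps
    uplink_total = uplinks * uplink_gbps
    return downlink_total / uplink_total


# 48 x 25 GbE server ports against 8 x 100 GbE uplinks.
ratio = oversubscription_ratio(downlinks=48, downlink_gbps=25,
                               uplinks=8, uplink_gbps=100)
print(f"Oversubscription: {ratio:.2f}:1")  # prints "Oversubscription: 1.50:1"
```

A ratio of 1:1 or lower means the leaf itself is non-blocking. A 1.5:1 leaf is a common compromise for general compute, while GPU training fabrics are typically designed closer to 1:1.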

Find the Right Switch

HBAs & Fibre Channel Adapters

Broadcom (Emulex) · Marvell (QLogic) · Broadcom (LSI / Avago)

Host bus adapters (HBAs) provide the high-speed interface between a server and its external storage subsystem, whether that is a SAN fabric over Fibre Channel or a direct-attached SAS/SATA JBOD enclosure. RawCompute supplies two primary categories of HBAs.

For Fibre Channel SAN connectivity, the Broadcom (Emulex) LPe36002 (64G FC dual-port) and Marvell (QLogic) QLE2772 (32G FC dual-port) are industry-standard adapters that connect servers to enterprise SAN arrays from NetApp, Pure Storage, Dell PowerStore, and HPE Alletra. These FC HBAs support N_Port ID Virtualisation (NPIV) for multi-tenant SAN access, NVMe over Fibre Channel (FC-NVMe), and end-to-end data integrity (T10 DIF/DIX) to protect against silent data corruption.

For direct-attached storage and JBOD expansion, the Broadcom 9500-16i (16 internal SAS/SATA/NVMe ports) and 9500-8e (8 external SAS ports) HBAs operating in IT mode (pass-through, no RAID) are the go-to choice for ZFS, Ceph, and TrueNAS deployments where the software layer handles all data protection. These tri-mode HBAs support SAS-3 (12 Gb/s), SATA III (6 Gb/s), and NVMe on a single controller, allowing mixed-drive JBODs with a single adapter.

All HBAs we sell ship with the correct low-profile and full-height brackets, are pre-flashed to the latest firmware, and are tested with your target drive enclosure where possible.

Find the Right HBA

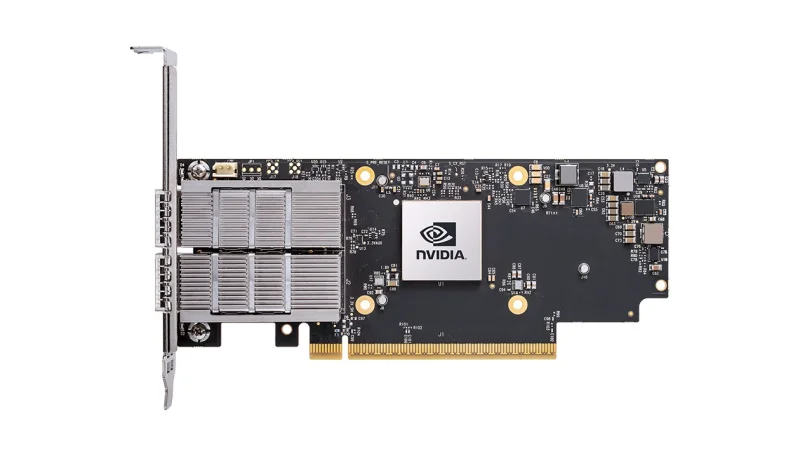

PCIe Network Interface Cards

NVIDIA (Mellanox) · Intel · Broadcom

PCIe network interface cards (NICs) are the server's gateway to the data-centre network fabric, and choosing the right NIC is essential for matching your server's I/O capability to your network's speed tier. RawCompute supplies PCIe NICs across the full performance spectrum.

The NVIDIA ConnectX-7 is our top recommendation for 100/200/400 GbE or InfiniBand deployments. It supports hardware-offloaded RoCE v2, GPUDirect RDMA for direct GPU-to-network data transfers (without staging data in host memory), and in-hardware IPsec and TLS acceleration. The ConnectX-6 Dx remains the sweet spot for 25/100 GbE Ethernet with SR-IOV virtualisation offload, supporting up to 1,024 virtual functions per port for dense containerised and VM-based workloads.

For Intel-based environments, the Intel E810-CQDA2 (dual 100 GbE QSFP28) supports Intel's ADQ (Application Device Queues) technology for application-aware traffic steering, and the Intel X710-DA2 (dual 10 GbE SFP+) remains the workhorse NIC for standard enterprise 10 GbE connectivity. Broadcom BCM57508 (P2100G) NetXtreme cards are a cost-effective alternative for 100 GbE and are also available in OCP 3.0 form factors.

We stock all major NIC form factors (PCIe full-height, low-profile, OCP 3.0) and can preconfigure them with firmware updates and driver packages for your target operating system.
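Matching a NIC to a server's I/O capability largely comes down to whether the PCIe slot can carry the card's line rate. The sketch below is a rough check that uses the published per-lane transfer rates for PCIe 3.0, 4.0, and 5.0 with 128b/130b encoding. The function names are illustrative, and the figures ignore packet-level protocol overhead, so real usable throughput is somewhat lower.

```python
# Per-lane raw transfer rate in GT/s for each PCIe generation.
PCIE_GT_PER_LANE = {3: 8.0, 4: 16.0, 5: 32.0}
# PCIe 3.0 and later use 128b/130b line encoding.
ENCODING_EFFICIENCY = 128 / 130


def pcie_usable_gbps(gen: int, lanes: int) -> float:
    """Approximate per-direction slot bandwidth in Gb/s after line encoding."""
    return PCIE_GT_PER_LANE[gen] * ENCODING_EFFICIENCY * lanes


def slot_can_feed(gen: int, lanes: int, port_gbps: float, ports: int = 1) -> bool:
    """True if the slot bandwidth covers all NIC ports running at line rate."""
    return pcie_usable_gbps(gen, lanes) >= port_gbps * ports


for gen in (3, 4, 5):
    print(f"PCIe Gen{gen} x16: {pcie_usable_gbps(gen, 16):.1f} Gb/s")

print(slot_can_feed(gen=4, lanes=16, port_gbps=400))           # single 400 Gb/s port
print(slot_can_feed(gen=5, lanes=16, port_gbps=400))           # same card in a Gen5 slot
print(slot_can_feed(gen=4, lanes=16, port_gbps=100, ports=2))  # dual 100 GbE
```

By this estimate a Gen4 x16 slot tops out at roughly 252 Gb/s, so a 400 Gb/s port needs a Gen5 x16 slot to run at line rate, while a dual 100 GbE card fits within Gen4 x16.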

Shop PCIe NICs

Building a multi-GPU cluster?

InfiniBand interconnect is non-negotiable for multi-node training. NVIDIA HDR (200 Gb/s) and NDR (400 Gb/s) fabrics provide the bandwidth needed for distributed LLM training. Talk to us about your cluster topology.

Enquire about InfiniBand

Need networking gear for your setup?

Tell us your requirements and we'll recommend and quote.