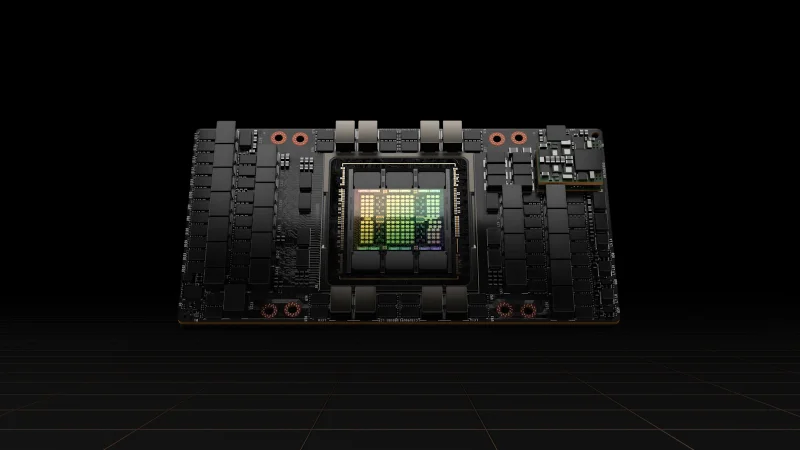

NVIDIA H100 PCIe

Hopper performance in a standard PCIe form factor

- VRAM: 80 GB
- Bandwidth: 2.0 TB/s
- FP16: 756.5 TFLOPS
- TDP: 350W

Technical Specifications

| Specification | Value |
| --- | --- |
| VRAM | 80 GB HBM2e |
| Memory Bandwidth | 2.0 TB/s |
| FP16 Performance | 756.5 TFLOPS |
| BF16 Performance | 756.5 TFLOPS |
| FP32 Performance | 51.2 TFLOPS |
| INT8 Performance | 1,513 TOPS |
| TDP | 350W |
| Form Factor | PCIe Gen5 Dual-Slot |
| Interconnect | NVLink Bridge (600 GB/s, 2-GPU pairs) |
| PCIe Interface | PCIe Gen5 x16 |
| Max GPUs per Server | 4-8 (chassis dependent) |
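As a rough planning aid, the per-card figures in the table can be scaled to a full server. The sketch below is illustrative only: `server_totals` is a hypothetical helper, and it counts GPU power alone, so CPUs, fans, NICs, and power-supply losses are assumed to be budgeted separately.

```python
# Per-card figures taken from the specification table.
VRAM_GB = 80
TDP_W = 350


def server_totals(num_gpus: int) -> dict:
    """Aggregate GPU-only figures for a server holding `num_gpus` H100 PCIe cards."""
    if num_gpus < 1:
        raise ValueError("num_gpus must be at least 1")
    return {
        "gpus": num_gpus,
        "total_vram_gb": num_gpus * VRAM_GB,
        "gpu_power_w": num_gpus * TDP_W,  # GPUs only, not whole-server power
        "nvlink_pairs": num_gpus // 2,    # NVLink Bridge links GPUs in pairs
    }


# The table lists 4-8 GPUs per server, depending on chassis.
for n in (4, 8):
    print(server_totals(n))
```

For an 8-GPU chassis this gives 640 GB of total VRAM and 2,800W of GPU power, which is a useful lower bound when checking rack power and cooling.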

Prices vary with supply and import costs. Contact us for current India pricing.

Best For

Not Ideal For

- Maximum-throughput multi-GPU training (SXM version has 900 GB/s NVLink vs 600 GB/s)

- Workloads requiring more than 2-GPU NVLink connectivity

Overview

The NVIDIA H100 PCIe brings Hopper-generation performance to standard PCIe server chassis. Compared with the SXM variant, it gives up some peak throughput (756 vs 989 FP16 TFLOPS) and memory bandwidth (2.0 vs 3.35 TB/s). In return, it runs at a 350W TDP, against up to 700W for the SXM module, and fits a wider range of servers.
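To put the PCIe versus SXM trade-off in proportion, the quoted figures can be turned into simple ratios. This is a minimal sketch using only the peak numbers stated on this page; the dictionary and function names are made up for illustration, and peak ratios do not predict performance on any particular workload.

```python
# Peak figures quoted on this page for each variant.
H100_PCIE = {"fp16_tflops": 756.5, "mem_bw_tbs": 2.0}
H100_SXM = {"fp16_tflops": 989.0, "mem_bw_tbs": 3.35}


def pcie_fraction_of_sxm(metric: str) -> float:
    """Return the PCIe card's peak figure as a fraction of the SXM figure."""
    return H100_PCIE[metric] / H100_SXM[metric]


for metric in H100_PCIE:
    print(f"{metric}: PCIe delivers {pcie_fraction_of_sxm(metric):.0%} of SXM")
```

On these numbers the PCIe card offers roughly three quarters of the SXM's peak FP16 throughput and about 60% of its memory bandwidth, so bandwidth-bound workloads are likely to feel the gap more than compute-bound ones.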

The PCIe form factor supports NVLink Bridge connections between pairs of GPUs at 600 GB/s, which makes 2-GPU configurations well suited to inference and fine-tuning. For teams upgrading from A100 PCIe deployments, the H100 PCIe is a drop-in replacement in most chassis, provided the chassis can power and cool 350W per card.
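One quick way to judge whether a single card or an NVLink-bridged pair is the right fit is to estimate how much memory the model weights alone would occupy. The sketch below is a back-of-the-envelope estimate under stated assumptions: the helper names are hypothetical, the model sizes are examples rather than recommendations, and it ignores KV cache, activations, optimizer state, and framework overhead, all of which raise real requirements.

```python
import math

VRAM_PER_GPU_GB = 80  # per-card VRAM from the specification table


def weights_gb(params_billions: float, bytes_per_param: int = 2) -> float:
    """Memory for model weights only, in GB (1 GB = 1e9 bytes).

    bytes_per_param=2 corresponds to FP16 or BF16 weights.
    """
    return params_billions * bytes_per_param


def min_gpus_for_weights(params_billions: float, bytes_per_param: int = 2) -> int:
    """Smallest number of 80 GB cards whose combined VRAM holds the weights."""
    return math.ceil(weights_gb(params_billions, bytes_per_param) / VRAM_PER_GPU_GB)


# Illustrative model sizes, in billions of parameters.
for size in (13, 70):
    print(f"{size}B params: {weights_gb(size):.0f} GB of weights, "
          f"at least {min_gpus_for_weights(size)} GPU(s)")
```

Under these assumptions a 70B-parameter model in FP16 needs 140 GB for weights, which exceeds one card but fits within the 160 GB of a 2-GPU pair, leaving limited headroom for everything else.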

This is a strong choice for inference deployments, managed GPU cloud platforms, and organizations that need H100-class performance without the complexity and cost of a full HGX baseboard setup.

Compare

NVIDIA H100 vs L40S

Compare NVIDIA H100 and L40S GPUs for AI inference, training, and visual compute. Specs, performance, and pricing analysis for Indian data centres.

NVIDIA H100 vs A100

Compare NVIDIA H100 SXM and A100 80 GB GPUs for AI training and inference workloads. Detailed specs, performance, and value analysis for Indian enterprises.

Get NVIDIA H100 PCIe pricing for your setup

Tell us your workload and cluster size. We'll quote the complete solution including servers, networking, and colocation.