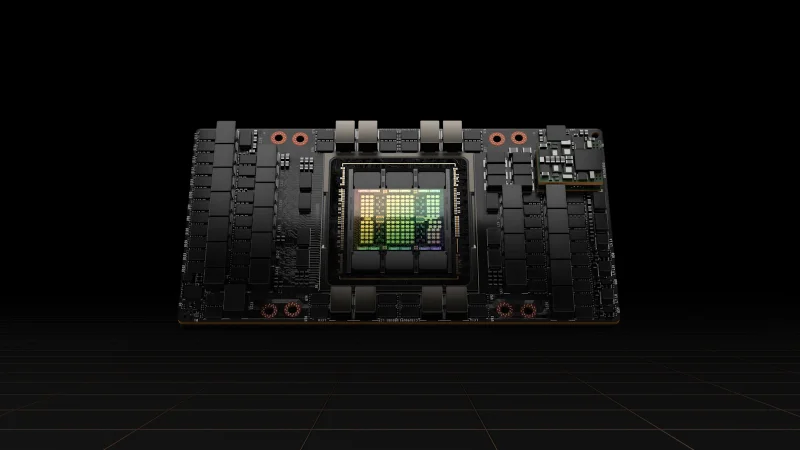

NVIDIA H200

Next-gen Hopper with 141 GB HBM3e for the largest AI models

VRAM: 141 GB · Bandwidth: 4.8 TB/s · FP16: 989.4 TFLOPS · TDP: 700W

Technical Specifications

| Specification | Value |
|---|---|
| VRAM | 141 GB HBM3e |
| Memory Bandwidth | 4.8 TB/s |
| FP16 Performance (Tensor Core, dense) | 989.4 TFLOPS |
| BF16 Performance (Tensor Core, dense) | 989.4 TFLOPS |
| FP32 Performance | 66.9 TFLOPS |
| INT8 Performance (Tensor Core, dense) | 1,979 TOPS |
| TDP | Up to 700W |
| Form Factor | SXM5 |
| Interconnect | NVLink 4.0 (900 GB/s per GPU) |
| PCIe Interface | PCIe Gen5 x16 |
| Max GPUs per Server | 8 (HGX H200) |

Prices vary with supply and import costs. Contact us for current pricing in India.


Not Ideal For

- Inference-only workloads where the L40S or L4 offers better cost per token

- Budget-limited deployments (the H100 is more widely available and less expensive)

Overview

The NVIDIA H200 is the memory-upgraded variant of the H100: it uses the same Hopper GPU architecture but pairs it with 141 GB of HBM3e memory and 4.8 TB/s of bandwidth. Compared with the H100 SXM (80 GB HBM3, 3.35 TB/s), that is a 76% increase in memory capacity and a 43% increase in bandwidth, which makes the H200 particularly well suited to memory-bound workloads, where performance is limited by memory capacity or bandwidth rather than by compute.
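The capacity and bandwidth percentages above can be checked with a few lines of arithmetic. The sketch below is a back-of-the-envelope calculation, assuming an H100 SXM baseline of 80 GB and 3.35 TB/s and dense FP16 Tensor Core throughput of 989.4 TFLOPS for both GPUs; the `GpuSpec` type and helper names are hypothetical. It also computes a simple roofline ridge point (peak FLOP/s divided by memory bytes/s): kernels whose arithmetic intensity falls below that value are bandwidth-limited rather than compute-limited.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GpuSpec:
    """Hypothetical container for a few spec-sheet figures."""
    name: str
    vram_gb: float        # memory capacity in GB
    bandwidth_tbs: float  # memory bandwidth in TB/s
    fp16_tflops: float    # dense FP16 Tensor Core throughput in TFLOPS


# Assumption: H100 SXM baseline of 80 GB / 3.35 TB/s; same compute peak on both.
H100_SXM = GpuSpec("H100 SXM", 80.0, 3.35, 989.4)
H200_SXM = GpuSpec("H200 SXM", 141.0, 4.8, 989.4)


def percent_increase(old: float, new: float) -> float:
    """Relative increase of `new` over `old`, in percent."""
    return (new / old - 1.0) * 100.0


def ridge_point(gpu: GpuSpec) -> float:
    """Roofline ridge point in FLOP/byte: kernels with lower arithmetic
    intensity than this are limited by memory bandwidth, not compute."""
    return (gpu.fp16_tflops * 1e12) / (gpu.bandwidth_tbs * 1e12)


if __name__ == "__main__":
    cap = percent_increase(H100_SXM.vram_gb, H200_SXM.vram_gb)
    bw = percent_increase(H100_SXM.bandwidth_tbs, H200_SXM.bandwidth_tbs)
    print(f"Capacity:  +{cap:.0f}%")
    print(f"Bandwidth: +{bw:.0f}%")
    for gpu in (H100_SXM, H200_SXM):
        print(f"{gpu.name}: ridge point ~{ridge_point(gpu):.0f} FLOP/byte")
```

Because both GPUs share the same compute peak, the H200's lower ridge point (about 206 vs 295 FLOP/byte) means more kernels clear the compute threshold, and those that stay below it can run up to roughly 43% faster under this idealized model. Real kernels rarely reach either roofline.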

For LLM inference, NVIDIA reports that the H200 delivers up to 1.9x the performance of the H100 on models such as Llama 2 70B. For training, the extra VRAM allows larger batch sizes and, in many cases where a model would not fit in an H100's 80 GB, removes the need to split it across GPUs with tensor or other model parallelism.
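To see why extra capacity and bandwidth matter for these claims, here is a rough sizing sketch. Everything in it is an assumption for illustration: the bytes-per-parameter rules of thumb (2 for FP16 weights, 1 for FP8, about 16 for mixed-precision Adam training), a hypothetical 20% overhead for KV cache and activations, the H100 SXM baseline of 80 GB and 3.35 TB/s, and the helper names `footprint_gb`, `min_gpus`, and `decode_ceiling`. It estimates the fewest GPUs whose pooled VRAM holds a model (more than one implies model parallelism) and an upper bound on batch-1 decode speed when every generated token must stream all weights from memory.

```python
import math

# Rules of thumb (assumptions, not measured values), bytes per parameter:
#   fp16/bf16 weights = 2, fp8 weights = 1,
#   mixed-precision Adam training ~16 (fp16 weights + grads, fp32 master weights + 2 moments)
BYTES_PER_PARAM = {"fp16": 2.0, "fp8": 1.0, "adam_mixed": 16.0}

H100_VRAM_GB, H100_BW_TBS = 80.0, 3.35  # assumed H100 SXM baseline
H200_VRAM_GB, H200_BW_TBS = 141.0, 4.8  # H200 figures from the spec table


def footprint_gb(params_billion: float, mode: str, overhead: float = 0.2) -> float:
    """Estimated memory in GB (1e9 bytes). `overhead` is a hypothetical fraction
    added for KV cache, activations, and runtime buffers."""
    return params_billion * BYTES_PER_PARAM[mode] * (1.0 + overhead)


def min_gpus(params_billion: float, mode: str, vram_gb: float) -> int:
    """Fewest GPUs whose pooled VRAM covers the estimate; a result above 1
    means the model must be split with some form of model parallelism."""
    return math.ceil(footprint_gb(params_billion, mode) / vram_gb)


def decode_ceiling(params_billion: float, mode: str, bandwidth_tbs: float) -> float:
    """Upper bound on batch-1 decode tokens/s for a model held on one GPU,
    assuming each token reads every weight from HBM once and compute never limits."""
    weight_bytes = params_billion * 1e9 * BYTES_PER_PARAM[mode]
    return bandwidth_tbs * 1e12 / weight_bytes


if __name__ == "__main__":
    for params, mode in [(70, "fp8"), (70, "fp16"), (7, "adam_mixed")]:
        print(f"{params}B {mode}: H100 x{min_gpus(params, mode, H100_VRAM_GB)}, "
              f"H200 x{min_gpus(params, mode, H200_VRAM_GB)}")
    # A 13B fp16 model fits on a single GPU of either type under these assumptions.
    h100 = decode_ceiling(13, "fp16", H100_BW_TBS)
    h200 = decode_ceiling(13, "fp16", H200_BW_TBS)
    print(f"13B fp16 batch-1 ceiling: H100 ~{h100:.0f} tok/s, "
          f"H200 ~{h200:.0f} tok/s ({h200 / h100:.2f}x)")
```

Under these assumptions a 70B model in FP8 fits on one H200 but needs two H100s, and a 7B model trained with mixed-precision Adam fits on one H200 but not on one H100. The decode ceiling simply scales with bandwidth (about 1.43x), so this simple model does not reproduce NVIDIA's 1.9x figure, which comes from NVIDIA's own benchmark configuration.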

Availability in India is limited. We maintain allocation priority with select OEM partners. If you are planning a multi-node H200 cluster, contact us early to secure supply.

Get NVIDIA H200 pricing for your setup

Tell us your workload and cluster size. We'll quote the complete solution, including servers, networking, and colocation.